DeepSeek Smackdown!

Author: Chas | Comments: 0 | Views: 86 | Posted: 25-02-07 21:52

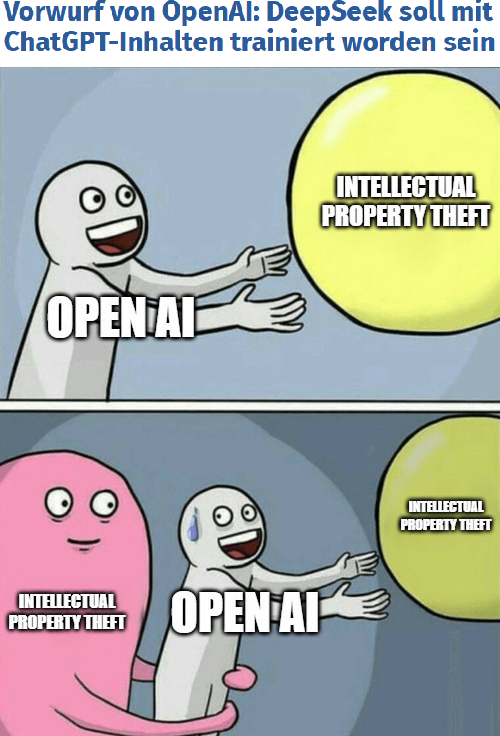

Still, some industry players view the DeepSeek announcement as an opportunity rather than a threat. While this approach might change at any moment, DeepSeek has essentially put a powerful AI model in the hands of anyone, a potential threat to national security and elsewhere. The potential data breach raises serious questions about the security and integrity of AI data-sharing practices. As AI technologies become increasingly powerful and pervasive, the protection of proprietary algorithms and training data becomes paramount. DeepSeek's approach used novel ways to slash the data processing requirements needed for training AI models by leveraging techniques such as Mixture of Experts, or MoE. The probe looks into data improperly acquired from OpenAI's technology. OpenAI's APIs allow software developers to integrate its sophisticated AI models into their own applications, provided they hold the appropriate license, described here as a Pro subscription costing $200 per month. The scale of the data exfiltration raised red flags, prompting concerns about unauthorized access and potential misuse of OpenAI's proprietary AI models. Unsurprisingly, many users have flocked to DeepSeek to access advanced models free of charge. Despite these issues, existing users continued to have access to the service.
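To make the Mixture of Experts idea concrete, here is a minimal sketch of top-k expert routing in plain Python. It is an illustration under stated assumptions, not DeepSeek's implementation: the toy experts, the `gate_scores` values, and the function names `route_top_k` and `moe_forward` are all hypothetical. The point it shows is that only the selected experts run for a given input, which is where the compute savings come from.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def route_top_k(gate_scores, k):
    """Return (expert_index, weight) pairs for the k highest-scoring experts.

    Weights are renormalized so the selected experts' weights sum to 1.
    """
    probs = softmax(gate_scores)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in top)
    return [(i, probs[i] / norm) for i in top]

def moe_forward(x, experts, gate_scores, k=2):
    """Combine the outputs of only the selected experts; the rest are never called."""
    return sum(w * experts[i](x) for i, w in route_top_k(gate_scores, k))

# Eight toy "experts" (hypothetical): each just scales its input by a different factor.
experts = [lambda x, s=s: s * x for s in range(1, 9)]
# Hypothetical router output for one token; a real router would compute this from the input.
gate_scores = [0.1, 2.0, -1.0, 0.3, 1.5, 0.0, -0.5, 0.2]

selected = route_top_k(gate_scores, k=2)
output = moe_forward(3.0, experts, gate_scores, k=2)
print(selected, output)
```

With `k=2` out of eight experts, only a quarter of the expert computation is performed for this input, while the router still chooses which experts are most relevant.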


DeepSeek's advances have caused significant disruption in the AI industry, resulting in substantial market reactions. Investors fear DeepSeek's advances may slash demand for high-performance chips, reduce energy consumption projections, and jeopardize the massive capital investments, totaling hundreds of billions of dollars, already poured into AI model development. As these models gain widespread adoption, the ability to subtly shape or limit information through model design becomes a critical concern. While models like ChatGPT do well with pre-trained answers and extended dialogues, DeepSeek thrives under pressure, adapting in real time to new data streams. If you are looking for an alternative to ChatGPT on your mobile phone, the DeepSeek APK is a good option. This innovation affects all participants in the AI arms race, disrupting key players from chip giants like Nvidia to AI leaders such as OpenAI and its ChatGPT. However, questions remain over DeepSeek's methodologies for training its models, particularly regarding the specifics of chip usage, the actual cost of model development (DeepSeek claims to have trained R1 for less than $6 million), and the sources of its model outputs. When working on important material, cross-reference its answers with other sources. Some sources have observed that the official API version of DeepSeek's R1 model uses censorship mechanisms for topics considered politically sensitive by the Chinese government.

Additionally, there are fears that the AI system could be used for foreign influence operations, spreading disinformation, surveillance, and the development of cyberweapons for the Chinese government. Create an API key for the system user. The modular design allows the system to scale efficiently, adapting to diverse applications without compromising performance. China's DeepSeek exemplifies this with its latest R1 open-source artificial intelligence reasoning model, a breakthrough that claims to deliver performance on par with U.S.-backed models like ChatGPT at a fraction of the cost. The unveiling of DeepSeek's V3 AI model, developed at a fraction of the cost of its U.S. Nvidia itself acknowledged DeepSeek's achievement, emphasizing that it aligns with U.S. DeepSeek appears to lack a business model that aligns with its ambitious goals. We also learned that for this task, model size matters more than quantization level, with larger but more quantized models almost always beating smaller but less quantized alternatives. This has allowed DeepSeek to create smaller and more efficient AI models that are faster and use less power. To use Ollama and Continue as a Copilot alternative, we will create a Golang CLI app. To use torch.compile in SGLang, add --enable-torch-compile when launching the server.
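The trade-off between model size and quantization level mentioned above can be illustrated with some back-of-the-envelope arithmetic. The sketch below estimates the memory taken by the weights alone; the 7B and 13B parameter counts and the 16-bit and 4-bit widths are hypothetical examples, and the estimate ignores activations, the KV cache, and quantization metadata.

```python
def weight_memory_gb(num_params, bits_per_weight):
    """Approximate memory for the weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9

# Hypothetical comparison: a smaller, less quantized model
# versus a larger, more heavily quantized one.
small_fp16 = weight_memory_gb(7e9, 16)   # 7B parameters at 16 bits per weight
large_q4 = weight_memory_gb(13e9, 4)     # 13B parameters at 4 bits per weight

print(f"7B @ 16-bit: {small_fp16:.1f} GB")
print(f"13B @ 4-bit: {large_q4:.1f} GB")
```

Under these assumptions the larger 4-bit model needs less than half the weight memory of the smaller 16-bit one, which is why a bigger but more quantized model can be the practical choice on the same hardware.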

We turn on torch.compile for batch sizes 1 to 32, where we observed the most acceleration. Such exceptions require the first option (catching the exception and passing), since the exception is part of the API's behavior. Provide a failing test by simply triggering the path with the exception. By defying conventional wisdom, DeepSeek has shaken the industry, triggering a sharp selloff in AI-related stocks. DeepSeek AI's R-1 model has made headlines in the world's media over the past few days. "The DeepSeek AI model rollout is leading investors to question the lead that US companies have and how much is being spent and whether that spending will lead to profits (or overspending)," said Keith Lerner, analyst at Truist. While I missed a few of these during some truly, crazily busy weeks at work, it is still a niche that nobody else is filling, so I will continue it. That all being said, LLMs are still struggling to monetize (relative to their cost of both training and running).
